Adaptive Learning of the Optimal Batch Size of SGD

Motasem Alfarra, Slavomir Hanzely, Alyazeed Albasyoni, Bernard Ghanem, Peter Richtárik

January 2020

Abstract

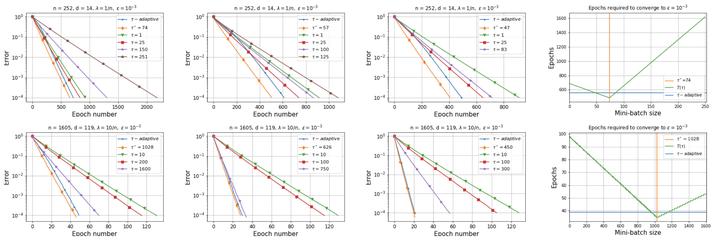

Recent advances in the theoretical understanding of SGD have led to a formula for the optimal batch size minimizing the number of effective data passes, i.e., the number of iterations times the batch size. However, this formula is of no practical value, as it depends on knowledge of the variance of the stochastic gradients evaluated at the optimum. In this paper, we design a practical SGD method capable of learning the optimal batch size adaptively throughout its iterations for strongly convex and smooth functions. Our method does this provably, and in our experiments with synthetic and real data it robustly exhibits nearly optimal behaviour; that is, it works as if the optimal batch size were known a priori. Further, we generalize our method to several new batch strategies not previously considered in the literature, including a sampling suitable for distributed implementations.

Publication

In Workshop on Optimization for Machine Learning

Machine Learning Researcher at Qualcomm AI Research, Amsterdam, Netherlands

I am a research scientist at Qualcomm AI Research in Amsterdam, Netherlands. I obtained my Ph.D. in Electrical and Computer Engineering from KAUST in Saudi Arabia, advised by Prof. Bernard Ghanem. I also obtained my M.Sc. degree in Electrical Engineering from KAUST and my undergraduate degree in Electrical Engineering from Kuwait University. I am interested in domain shifts and LLM safety, and in how to address these challenges with test-time adaptation and continual learning. I helped co-organize the first and second workshops on Test-Time Adaptation at CVPR 2024 and ICML 2025, and the ICLR 2026 workshop on Monitoring ML Models Under Drift.